© Authors


From computer chess to self-driving cars, artificial intelligence is changing our day-to-day lives. A computer can learn to perform tasks that were previously done only by humans through repeated attempts, learning from its mistakes; this approach is called reinforcement learning (RL). The data processing steps in radio astronomy can also be viewed as such tasks, albeit rather abstract ones. For any such data processing task, the optimal allocation of compute resources (such as the network and GPUs) and the optimal hyperparameter settings still have to be determined by an experienced person.
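To make the trial-and-error idea concrete, here is a minimal sketch, not the method used in this work: a simple epsilon-greedy agent (a multi-armed bandit, far simpler than a full RL agent) that learns which of a few candidate hyperparameter values gives the best score. The function `toy_pipeline_score` is a hypothetical stand-in for a real processing pipeline; its optimum at 0.3 and its noise level are invented purely for illustration.

```python
import random


def toy_pipeline_score(setting: float, rng: random.Random) -> float:
    """Hypothetical stand-in for running a data processing pipeline.

    Returns a noisy performance score that peaks at setting == 0.3.
    A real pipeline would be far slower and its optimum unknown.
    """
    return -(setting - 0.3) ** 2 + rng.gauss(0.0, 0.002)


def epsilon_greedy_tune(candidates, n_trials=500, epsilon=0.1, seed=0):
    """Learn by repeated attempts which candidate setting scores best."""
    rng = random.Random(seed)
    counts = [0] * len(candidates)
    mean_reward = [0.0] * len(candidates)
    for t in range(n_trials):
        if t < len(candidates):
            arm = t  # try every setting once first
        elif rng.random() < epsilon:
            arm = rng.randrange(len(candidates))  # explore a random setting
        else:
            # exploit the setting with the best average score so far
            arm = max(range(len(candidates)), key=lambda i: mean_reward[i])
        reward = toy_pipeline_score(candidates[arm], rng)
        counts[arm] += 1
        # incremental update of the running mean reward for this setting
        mean_reward[arm] += (reward - mean_reward[arm]) / counts[arm]
    best = max(range(len(candidates)), key=lambda i: mean_reward[i])
    return candidates[best], mean_reward


best, means = epsilon_greedy_tune([0.0, 0.1, 0.2, 0.3, 0.4, 0.5])
print(best)
```

The exploration rate `epsilon` keeps the agent occasionally trying other settings, so an unlucky early score does not lock it onto a poor choice.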

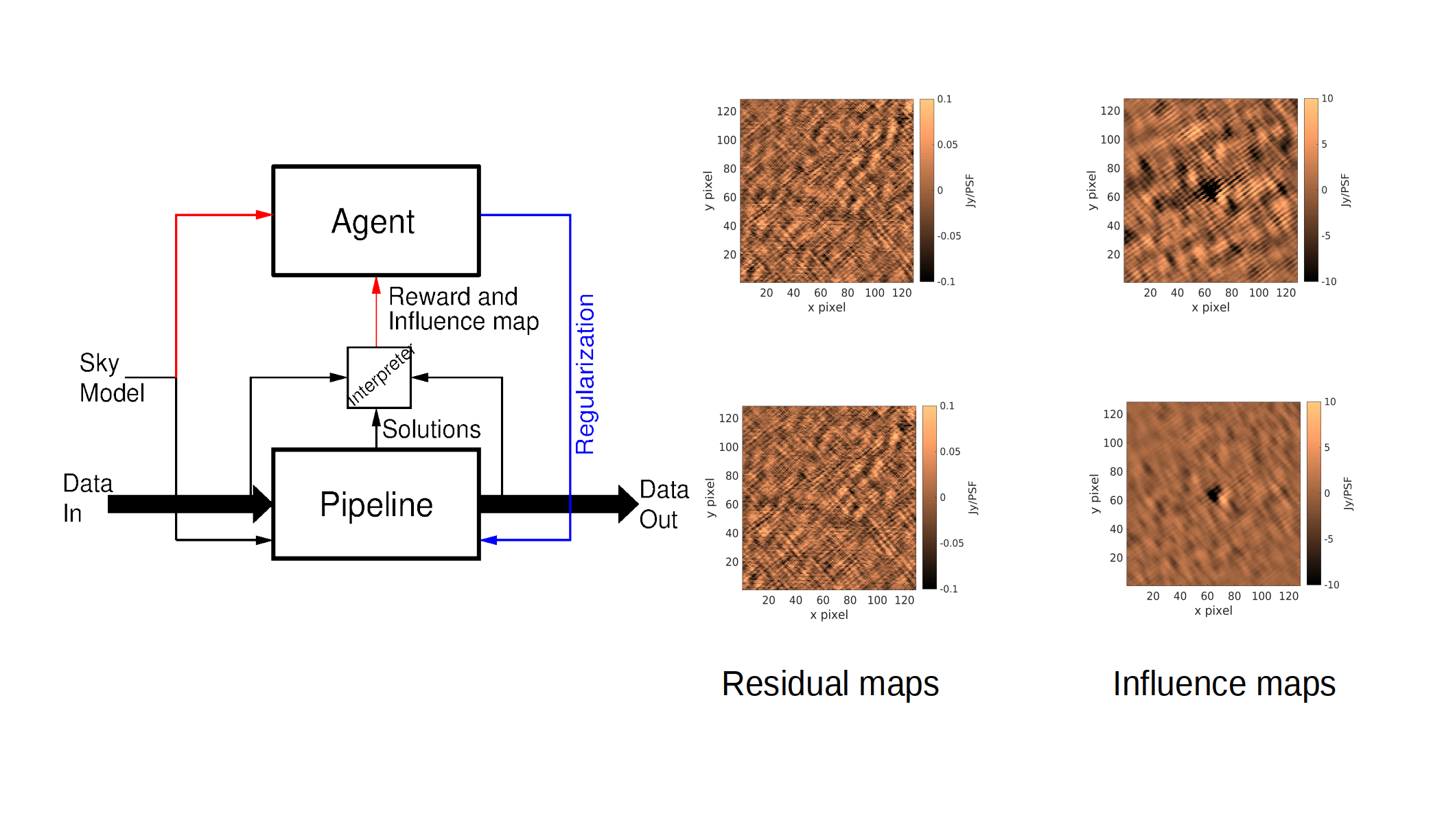

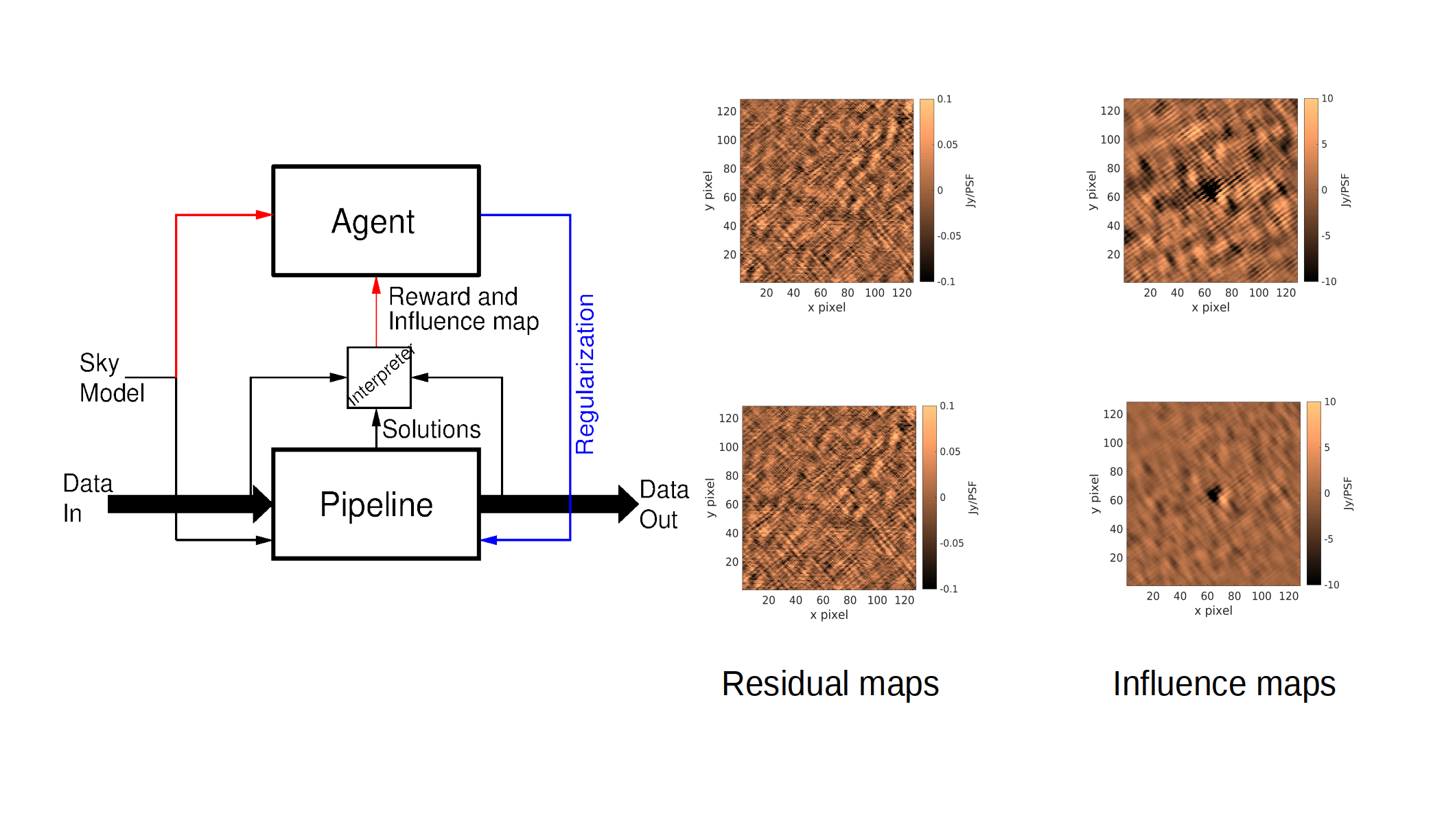

Our recent work has, for the first time, introduced reinforcement learning into radio astronomical data processing. An RL agent trained in this way can fine-tune hyperparameters automatically, without human intervention. The left panel shows how the agent interacts with a data calibration pipeline. Crucial to this work is measuring the performance of the pipeline, and for this we have introduced influence maps, which measure the performance almost instantaneously. The right panel shows maps of the residual data and their corresponding influence maps. With only a small amount of data, we can detect even subtle changes in performance. Instead of waiting days for a pipeline to finish processing, we can use the influence maps to feed the performance back to the RL agent in real time. This concept of combining an RL agent with influence functions is new and has many more applications in data processing tasks well beyond radio astronomy.
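The text does not spell out how influence maps are computed. As a hedged illustration only, the sketch below treats an influence function as the sensitivity of the residual to the input data, for a toy linear fit with a ridge penalty `lam` standing in for a tunable hyperparameter. Real calibration is non-linear and its influence maps are images; the model matrix `A`, `lam`, and the helper names `residual` and `influence_matrix` are hypothetical. For this linear case the sensitivity has the closed form `I - A (A^T A + lam I)^{-1} A^T`, so it can be evaluated from the model alone, without rerunning the fit.

```python
import numpy as np


def residual(A: np.ndarray, y: np.ndarray, lam: float) -> np.ndarray:
    """Residual of a ridge-regularized least-squares fit of y ~ A @ x.

    `lam` plays the role of a tunable hyperparameter (hypothetical).
    """
    n_params = A.shape[1]
    x_hat = np.linalg.solve(A.T @ A + lam * np.eye(n_params), A.T @ y)
    return y - A @ x_hat


def influence_matrix(A: np.ndarray, lam: float) -> np.ndarray:
    """d(residual)/d(data) = I - A (A^T A + lam I)^{-1} A^T."""
    n_data, n_params = A.shape
    hat = A @ np.linalg.solve(A.T @ A + lam * np.eye(n_params), A.T)
    return np.eye(n_data) - hat


rng = np.random.default_rng(1)
n_data, n_params, lam = 50, 3, 0.5
A = rng.standard_normal((n_data, n_params))  # toy model matrix
y = A @ np.array([1.0, -2.0, 0.5]) + 0.1 * rng.standard_normal(n_data)

infl = influence_matrix(A, lam)
influence_map = np.diag(infl)  # one sensitivity value per data sample

# Predict how the residual responds to a small data perturbation without
# refitting, then compare with an explicit refit.
delta = 1e-3 * rng.standard_normal(n_data)
predicted = residual(A, y, lam) + infl @ delta
refit = residual(A, y + delta, lam)
print(np.max(np.abs(predicted - refit)))
```

Because the residual here depends linearly on the data, the predicted and refitted residuals agree to rounding error; for a non-linear pipeline the same derivative would only be a local approximation.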
